In The News

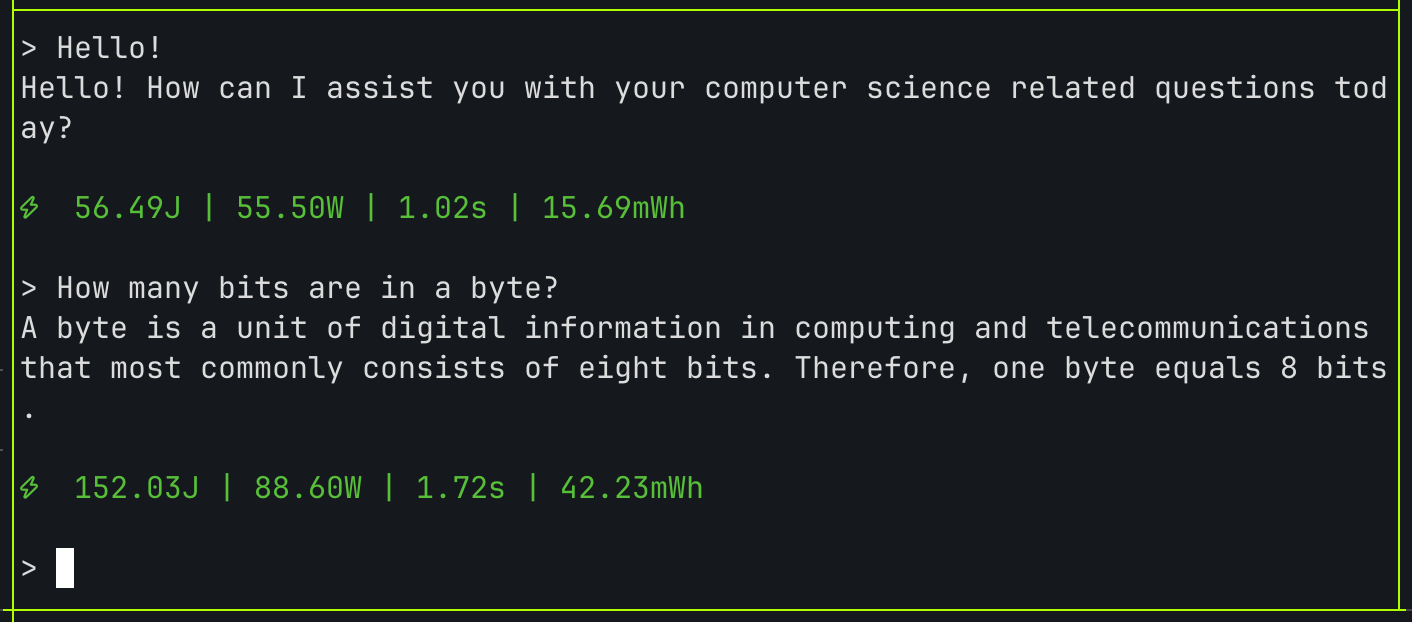

Today, Neuralwatt is introducing Allowance: budget controls built directly into the platform that give every API key its own spending limit. Teams gain visibility and control before runaway consumption interrupts their work. Neuralwatt can already increase throughput by 33 percent. Allowance extends that progress by bringing the same transparency and control to AI budgets that Neuralwatt has always brought to energy usage.

AI Without Guardrails

AI spending is growing faster than most teams can plan for. The industry has invested heavily in making AI more capable, but far less in making it manageable. Global AI spend is on track to reach $2.53 trillion in 2026 (up 44 percent year over year), and 86 percent of organizations say their budgets will increase further. Despite that investment, most teams still have no real-time visibility into what they are actually consuming.

The financial impact is only part of what is at stake. When a workflow hits its limit, critical work grinds to a halt. For teams that have built AI into their core operations, that interruption is not a minor inconvenience; it is a productivity failure. Cost overruns and productivity loss are symptoms of the same underlying problem: teams do not have the visibility they need to stay ahead of either.

“Compute doesn't exist without energy, and cost shouldn't exist without visibility. So, AI without budget controls is nothing but a blank check,” said Chad Gibson, co-founder and CEO of Neuralwatt. “Transparency is foundational to everything we build at Neuralwatt, and in an industry where AI consumption has been largely opaque, we’re providing that clarity.”

Smarter Inference Without Overspend

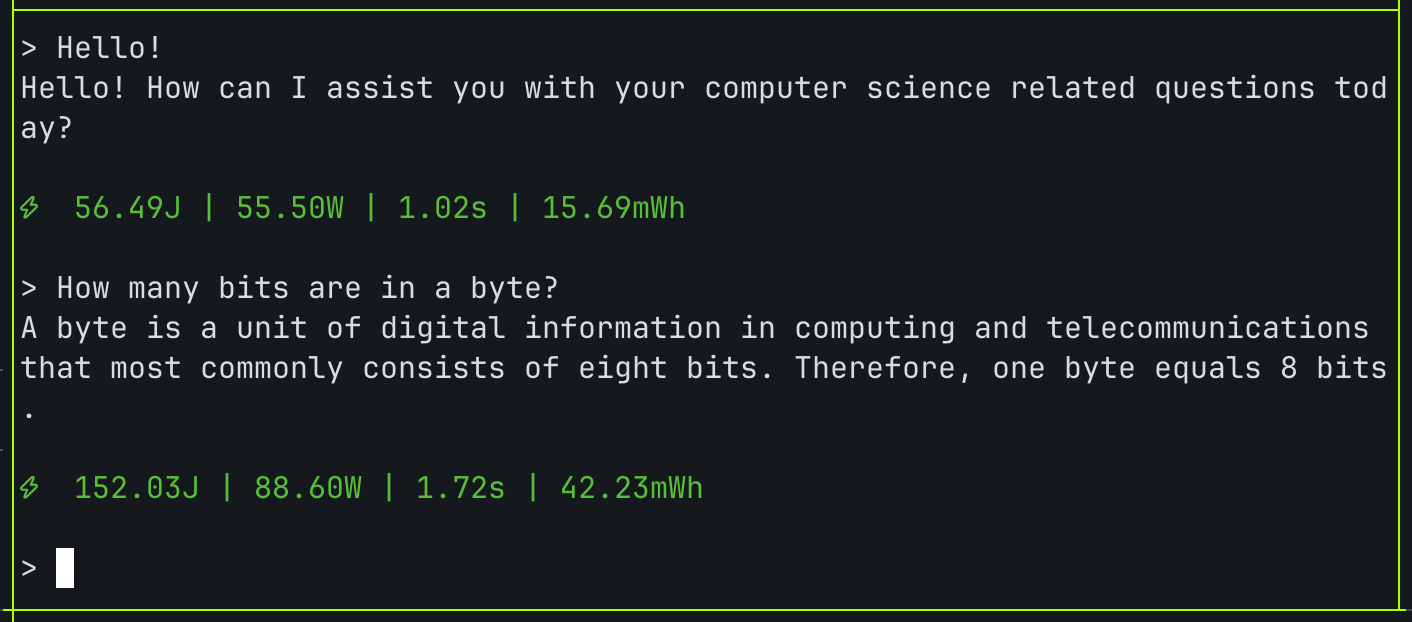

Neuralwatt Allowance was built to put control back into the hands of the teams running the workloads. Every API key gets its own spending limit — daily, weekly, or monthly — and every response includes real-time pricing headers so teams can see exactly what each request costs, as it happens. Agents become budget-aware, reporting progress and flagging when they are approaching their limit, rather than hitting a wall without warning.

For agentic workflows, per-session limits add another layer of control. They cap what a single agent can spend without affecting other sessions running in parallel. Allowance also sends an email notification when a key reaches 80% of its limit, so teams are warned before the key hits its limit. Allowance is native to the Neuralwatt platform and is included in every subscription. It is active from day one, with no additional setup required.
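The Allowance mechanics described above can be illustrated with a small client-side tracker. These are a per-key limit over a daily, weekly, or monthly window, a warning at 80% of that limit, and optional per-session caps for agents. This is a minimal sketch under stated assumptions. The class and method names are hypothetical and do not correspond to any Neuralwatt API. The per-request cost is passed in directly, because the article mentions pricing headers but does not name them.

```python
from dataclasses import dataclass, field
from typing import Optional

# The source states Allowance sends an email warning at 80% of a key's limit.
WARN_FRACTION = 0.80
VALID_PERIODS = {"daily", "weekly", "monthly"}


@dataclass
class KeyBudget:
    """Hypothetical client-side mirror of one API key's spending limit."""

    limit_usd: float                          # limit for the current window
    period: str = "monthly"                   # "daily", "weekly", or "monthly"
    session_cap_usd: Optional[float] = None   # optional per-session cap for agents
    spent_usd: float = 0.0
    session_spend: dict = field(default_factory=dict)

    def __post_init__(self) -> None:
        if self.period not in VALID_PERIODS:
            raise ValueError(f"period must be one of {sorted(VALID_PERIODS)}")

    def can_spend(self, session_id: str, cost_usd: float) -> bool:
        """True if a request costing cost_usd fits both the key and session budgets."""
        if self.spent_usd + cost_usd > self.limit_usd:
            return False
        if self.session_cap_usd is not None:
            used = self.session_spend.get(session_id, 0.0)
            if used + cost_usd > self.session_cap_usd:
                return False
        return True

    def record(self, session_id: str, cost_usd: float) -> None:
        """Add the cost of a completed request to the key and its session."""
        self.spent_usd += cost_usd
        self.session_spend[session_id] = self.session_spend.get(session_id, 0.0) + cost_usd

    def near_limit(self) -> bool:
        """True once spend reaches the 80% warning threshold."""
        return self.spent_usd >= WARN_FRACTION * self.limit_usd

    def reset(self) -> None:
        """Start a new window (the caller decides when a day, week, or month rolls over)."""
        self.spent_usd = 0.0
        self.session_spend.clear()


budget = KeyBudget(limit_usd=10.0, period="daily", session_cap_usd=4.0)
print(budget.can_spend("agent-a", 3.0))  # True
budget.record("agent-a", 3.0)
print(budget.can_spend("agent-a", 2.0))  # False: would exceed the 4.0 session cap
print(budget.can_spend("agent-b", 2.0))  # True: other sessions are unaffected
```

The sketch checks before a request and records after it. This mirrors the idea of a budget-aware agent that reports progress and flags an approaching limit rather than hitting a wall. In a real client, the cost passed to `record` would come from the per-response pricing headers, whose names are not given here.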

Neuralwatt was founded on the conviction that AI infrastructure should be transparent by design. Allowance builds on that foundation, ensuring gains from optimization aren’t eroded by unplanned spending, and that capacity limits are never the reason great work doesn't get done.

Neuralwatt, the leader in AI power optimization, today released findings from joint testing with ZutaCore, a pioneer in waterless direct-to-chip liquid cooling, showing that AI data centers can operate at 45°C warm-water temperatures on current-generation B200 hardware, effectively eliminating the need for energy-intensive mechanical cooling without sacrificing a single token of inference performance.

In one of the industry's first combined compute and cooling optimization testing efforts, Neuralwatt and ZutaCore ran nearly 500 paired experiments on NVIDIA B200 hardware under real-world, high-intensity AI inference workloads. The results show that current-generation hardware can operate at the warm-water temperatures the industry is moving toward.

The 45°C era is already here

At CES in January, Jensen Huang announced that NVIDIA's next-generation Vera Rubin platform is designed to operate with 45°C inlet water. That is warm enough to eliminate chillers in most climates and significantly reduce energy consumption and water use. The industry took note, but many were left wondering about timing. Is that operating point viable today, on hardware already running, or only something to prepare for in the next hardware cycle?

Results from Neuralwatt and ZutaCore testing confirm that 45°C warm-water operation is viable today, on hardware operators are already running. Across nearly 500 paired experiments at temperatures from 28°C to 46°C, the combined Neuralwatt and ZutaCore stack delivered:

- Zero thermal throttling across the entire tested range. ZutaCore's cooling keeps B200 GPUs within safe thermal limits at warm-water temperatures most operators have not attempted. The system does not throttle until 49°C, well above the 45°C industry target, giving operators meaningful room to operate.

- No performance penalty. With ZutaCore cooling and Neuralwatt's optimization agent working together, inference throughput at warm operating temperatures is statistically indistinguishable from the coolest tested point. Running hotter does not mean running slower.

With ZutaCore's cooling as the foundation, Neuralwatt's optimization agent delivered:

- Roughly triple the thermal buffer at 45°C compared to running without optimization. GPU memory temperatures stay approximately 6°C further from the throttle boundary, so the system is not just stable at 45°C; it has meaningful headroom to spare.

- An 18-19% reduction in server power consumption across every temperature tested, roughly 10x more impactful than any savings achievable through cooling adjustments alone. Because less server power means less heat to remove, those savings extend beyond the servers themselves. At typical operating efficiency, every 8-GPU system yields an estimated 6.3 kW reduction in total facility power.

- A 3x improvement in power stability, with variability dropping from 4.2% to 1.2%. AI workloads run more consistently, capacity planning becomes more reliable, and delivering on performance commitments gets meaningfully easier.

- Between 1.5°C and 7°C of effective thermal reduction depending on the workload, achieved through software optimization alone. This extends the safe operating range without any changes to physical infrastructure, giving operators more room to push hardware harder.

The path forward

AI is growing faster than the infrastructure built to support it. Global AI computing capacity has surged 3.3x per year, and power and thermal constraints are increasingly limiting how far organizations can scale. But the combined ZutaCore and Neuralwatt stack gives operators a concrete path to more compute, lower costs, and a smaller energy footprint without new hardware, new construction, or waiting on the next GPU generation.

Additional trials are underway, and the co-optimization opportunities between ZutaCore's cooling control and Neuralwatt's workload-aware agent represent a path to further gains.

The constraint on AI growth is no longer just compute; it is energy, thermal capacity, and the infrastructure built to support both. The industry has historically treated those as fixed constraints, but Neuralwatt and ZutaCore are proving that they are not.

About Neuralwatt

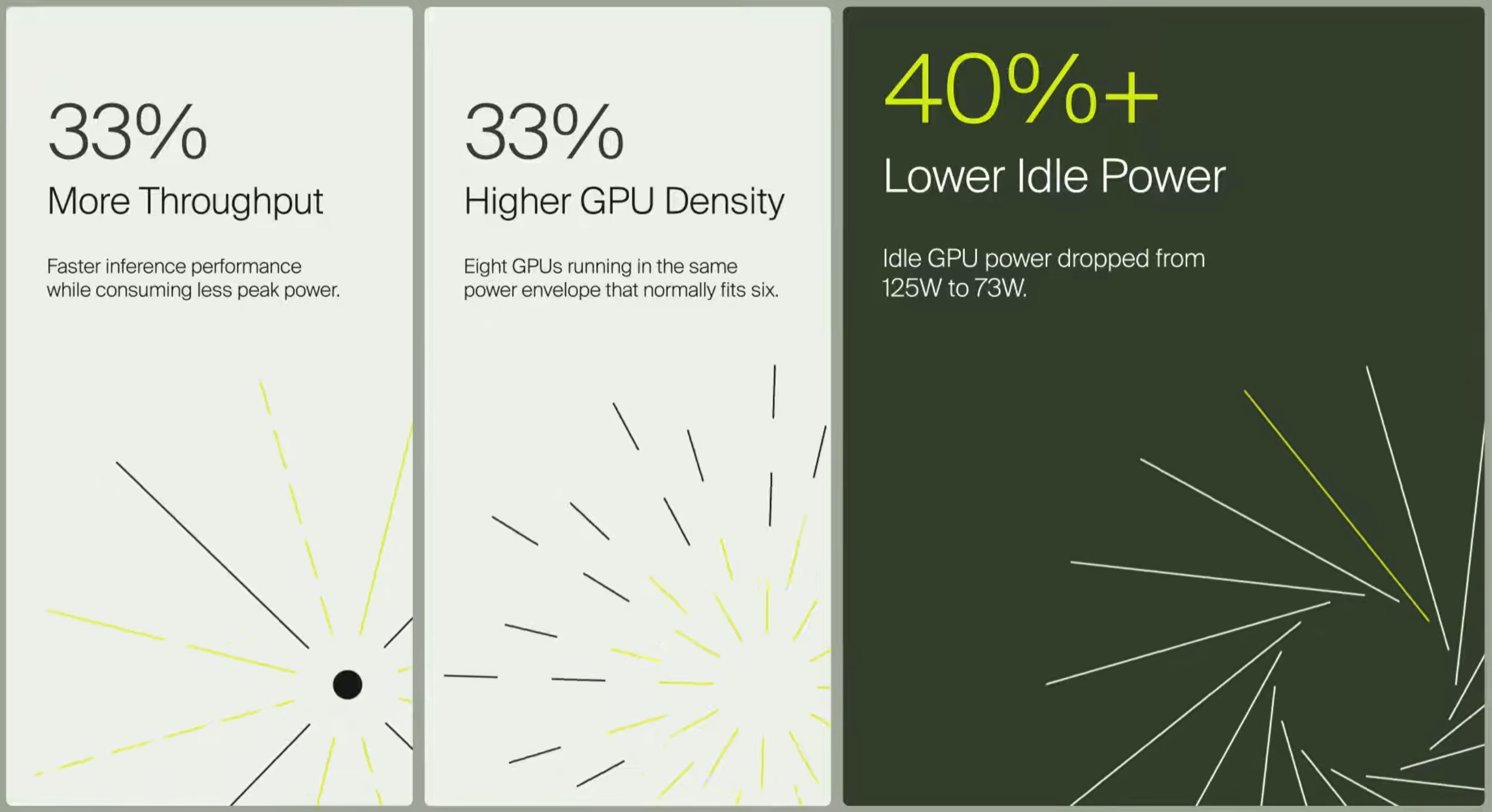

Neuralwatt is AI power optimization software that sits alongside existing infrastructure, measuring and optimizing energy at the workload level. By making every watt visible, measurable, and actionable, Neuralwatt helps organizations increase capacity, reduce costs, and lower carbon emissions — without new hardware or infrastructure changes. In production environments, customers have achieved 33 percent more compute from their existing power envelopes.

About ZutaCore

ZutaCore is a leader in waterless, direct-to-chip liquid cooling for AI and high-performance computing. Powered by its patented two-phase HyperCool technology, ZutaCore removes heat directly at the processor through a sealed, closed-loop system — eliminating water use, reducing cooling energy by up to 82%, and enabling significantly higher compute density.

For more information on the testing methodology and full results, contact meaghan@neuralwatt.com

Yesterday, we joined the OpenClaw community for ClawShop, the largest virtual OpenClaw event to date, hosted by our friends at Kilo. Events like ClawShop put us in front of the builders who are pushing AI agent workflows forward, and we left feeling energized about the future and who we're building for.

How to integrate Neuralwatt with your existing tools

Neuralwatt and GreenPT today announced a strategic partnership to set a new global standard for sustainable AI inference. The collaboration responds directly to the lack of energy transparency in AI inference. It introduces infrastructure where energy usage is measurable, transparent, and tied to how AI is actually consumed.

Neuralwatt launched Neuralwatt Cloud, a hosted inference platform with the industry’s first energy-based pricing model, designed to boost compute capacity and reduce energy costs without new infrastructure.

Want to increase your AI server density without sacrificing performance? Discover how Neuralwatt used Crusoe Cloud to solve the "AI energy paradox" and prove their innovative power management software. By leveraging our NVIDIA HGX H100 GPU clusters in Iceland, powered by 100% clean geothermal and hydro energy, Neuralwatt gained the unrestricted NVIDIA SMI access they needed to drive deep hardware-level telemetry. The results of this breakthrough:

- 33% increase in AI inference throughput
- 33% better server density (8 GPUs in a 6-GPU power envelope)
- 40%+ reduction in idle GPU power draw (from 125W to 73W)

"On Crusoe, we’ve had everything we needed… The ease of access to the NVIDIA hardware has been instrumental," says Chad Gibson, Co-founder and CEO of Neuralwatt.
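The headline percentages in this case study follow directly from the raw figures it quotes. This quick check rests on two assumptions, both interpretations rather than definitions from the source. First, "server density" means GPUs per fixed power envelope. Second, the idle reduction is measured against the 125W baseline.

```python
def percent_change(before: float, after: float) -> float:
    """Relative change from `before` to `after`, in percent."""
    return (after - before) / before * 100.0


# Density: 8 GPUs now fit in the power envelope that previously held 6.
density_gain = percent_change(6, 8)

# Idle GPU power draw, in watts, before and after optimization.
idle_change = percent_change(125, 73)

print(f"density: +{density_gain:.1f}%")   # density: +33.3%
print(f"idle power: {idle_change:.1f}%")  # idle power: -41.6%
```

An idle reduction of 41.6% is consistent with the "40%+" figure. The density gain of 33.3% matches the stated 33%.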

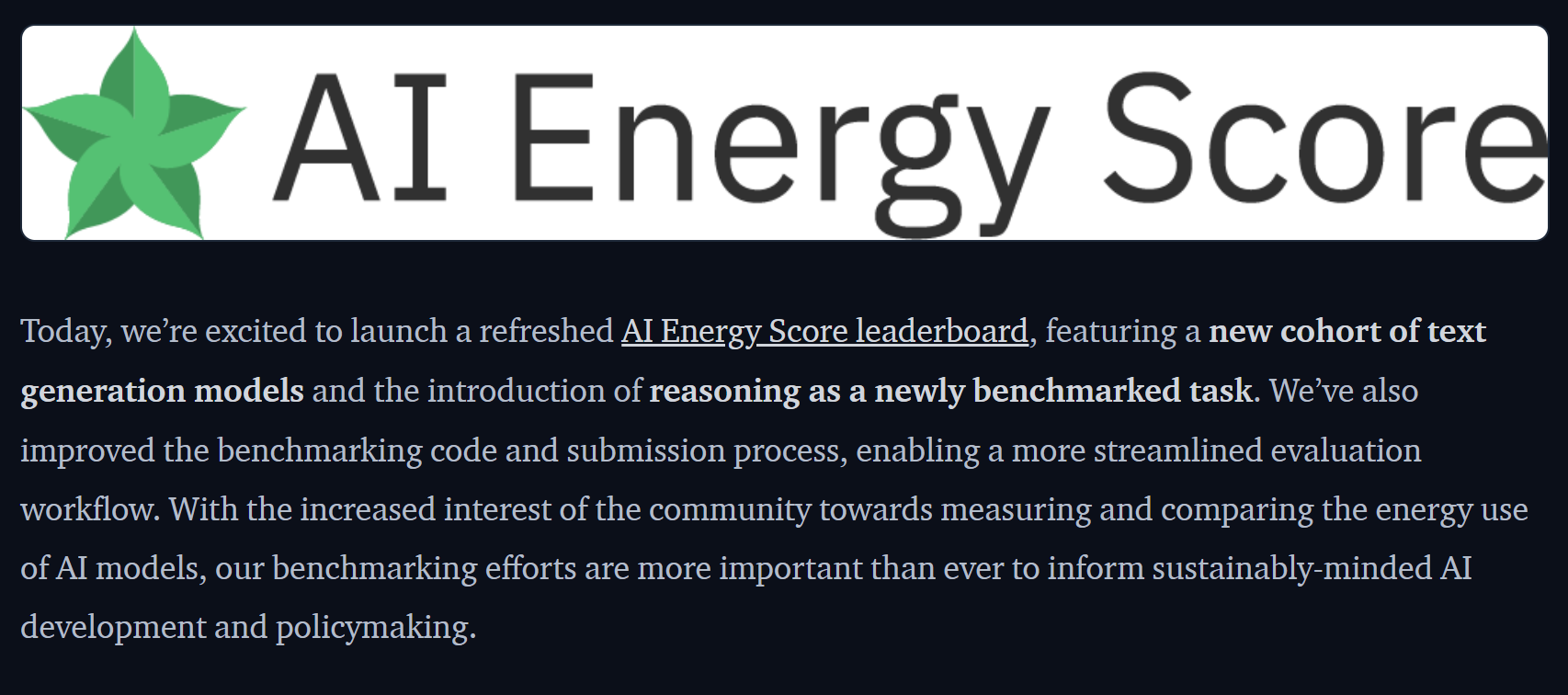

Neuralwatt helped power the AI Energy Score v2 refresh. In partnership with Hugging Face’s AI Energy Score team, Neuralwatt (via Scott Chamberlin) contributed to streamlining the benchmarking workflow and expanding the evaluation suite to support reasoning-model energy testing. This work also supported the creation of AI Energy Benchmarks, a new open-source package designed to make energy benchmarking more scalable and portable across different hardware/software configurations—helping the community better measure and compare the real-world energy cost of modern LLM inference, especially as reasoning modes become more common.

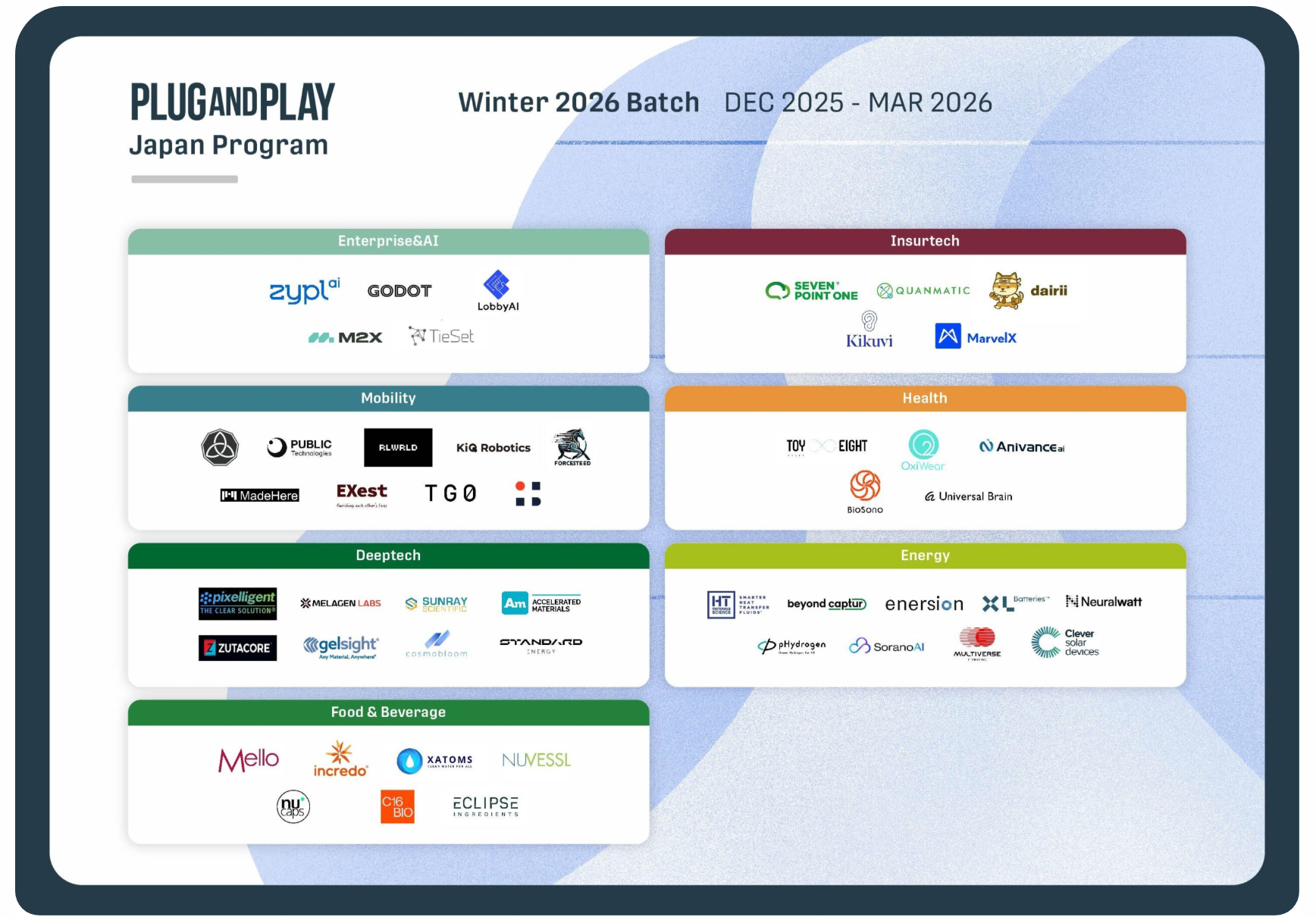

Neuralwatt was selected for the Winter 2026 Plug and Play Accelerator program.


Neuralwatt, a pioneer in AI power management, boosted AI inference throughput by 33% and reduced GPU idle power by over 40% on Crusoe Cloud. Discover how Crusoe's cost-effective, climate-aligned, and technically open platform empowered their breakthrough in sustainable AI.


Last year, as we published the Cleantech 50 to Watch, the world stood on the edge of a U.S. presidential election, and with it, a great deal of uncertainty about the direction of policy and investment.


How software tools are improving data center speed-to-power while easing grid strain


In this episode of Environment Variables, host Chris Adams welcomes Scott Chamberlin, co-founder of Neuralwatt and former Microsoft software engineer, to discuss energy transparency in large language models (LLMs).


Hear from Lowercarbon Capital, Collaborative Fund, Alumni Ventures, New System Ventures, SOSV, Streetlife Ventures, DCVC, Pangaea Ventures, F(v) and more

Blog Posts


What We Shared at ClawShop, and why AI's Hidden Costs are Finally Getting a Fix
